AI video translation in 2026 achieves a Word Error Rate (WER) below 4% on professionally recorded audio for major languages—matching professional human transcriptionists. Translation accuracy reaches 95–98% for Tier 1 language pairs (Spanish, French, German, Portuguese, Italian). For 95%+ of standard creator and business content, AI translation quality is indistinguishable from studio-grade human dubbing in normal viewing conditions.

VideoDubber AI Video Translation Accuracy 2026

| Question | Section |

|---|---|

| What metrics are used to measure AI translation accuracy? | How Accuracy Is Measured: Key Metrics |

| What WER does state-of-the-art AI achieve in 2026? | Benchmarks: What the Numbers Say in 2026 |

| How does accuracy vary by language? | Accuracy by Language Pair |

| What factors affect AI translation quality? | Factors That Affect AI Translation Accuracy |

| How does AI compare to manual studio dubbing? | AI vs Manual Dubbing: Side-by-Side Accuracy |

| What does real-world AI-dubbed content look like? | Real-World Examples: AI Translation in Action |

| Where does AI still fall short? | Where AI Translation Still Has Limits |

| How can I improve my AI translation results? | How to Get the Best Accuracy from AI Translation |

| What's the cost difference between AI and manual dubbing? | AI vs Human Translation: Cost and Speed Comparison |

| Frequently asked questions | Frequently Asked Questions |

AI translation accuracy is an aggregate of several distinct measures, each capturing a different layer of the translation pipeline. Three primary benchmarks define evaluation: Word Error Rate (WER), BLEU score, and Mean Opinion Score (MOS).

Word Error Rate (WER) is the primary metric for speech-to-text accuracy, measuring the percentage of words incorrect, omitted, or inserted compared to a perfect human transcript. WER = (Substitutions + Deletions + Insertions) / Total Reference Words x 100%. A WER of 4% on a 10-minute video (~1,500 words) produces roughly 60 word errors—most minor and contextually correctable.

| WER Level | Interpretation | Typical System |

|---|---|---|

| 20%+ | Barely usable; frustrating to read | Legacy systems, 2015-era |

| 10–19% | Useful for rough drafts only | Mid-tier systems, 2020 |

| 5–9% | Adequate for informal content | Good commercial systems |

| 2–4% | Professional-grade; matches human transcribers | State-of-the-art AI, 2025–2026 |

| <2% | Near-perfect; exceeds human average | Best-in-class models, studio audio |

BLEU (Bilingual Evaluation Understudy) measures translation quality by comparing AI-generated output against reference human translations on a 0–1 scale. A BLEU score above 0.40 is considered high quality. Top neural MT systems score 0.45–0.55 on standard benchmarks for high-resource language pairs, according to 2025 WMT evaluation—up from 0.25–0.35 five years ago.

Mean Opinion Score (MOS) measures perceptual audio quality through human listener ratings on a 1–5 scale, used to evaluate AI voice synthesis and voice cloning output. Modern AI voice synthesis achieves MOS scores of 4.0–4.4 for most major languages—comparable to professional voice recording, per Interspeech 2024 research.

Legacy speech recognition systems from 2015–2018 had Word Error Rates of 15–25%—output useful only for rough notes. Today's leading models achieve below 4% on clear audio, a 5x improvement in one decade.

| Metric | Legacy AI (2018) | Current AI (2026) | Human Transcriptionist |

|---|---|---|---|

| WER (clear audio) | 15–25% | 2–4% | 4–5% |

| WER (challenging audio) | 30–50% | 8–15% | 10–18% |

| Translation BLEU (major pairs) | 0.25–0.35 | 0.45–0.55 | ~0.60 (human reference) |

| Voice MOS (cloning) | 2.5–3.0 | 4.0–4.4 | 4.5–4.8 |

| Processing time (10 min video) | N/A (not available) | 10–20 minutes | 8–24 hours |

Modern AI video translation platforms like VideoDubber achieve 95–98% translation accuracy for major language pairs on professionally recorded source audio—the threshold at which most viewers cannot identify translation artifacts in normal watching conditions.

Professional human transcriptionists average 4–5% WER due to listener fatigue, domain-specific terminology gaps, and accent variation (AMTA benchmarks). State-of-the-art AI now matches this human baseline for clear audio.

Word Error Rate Comparison AI vs Human

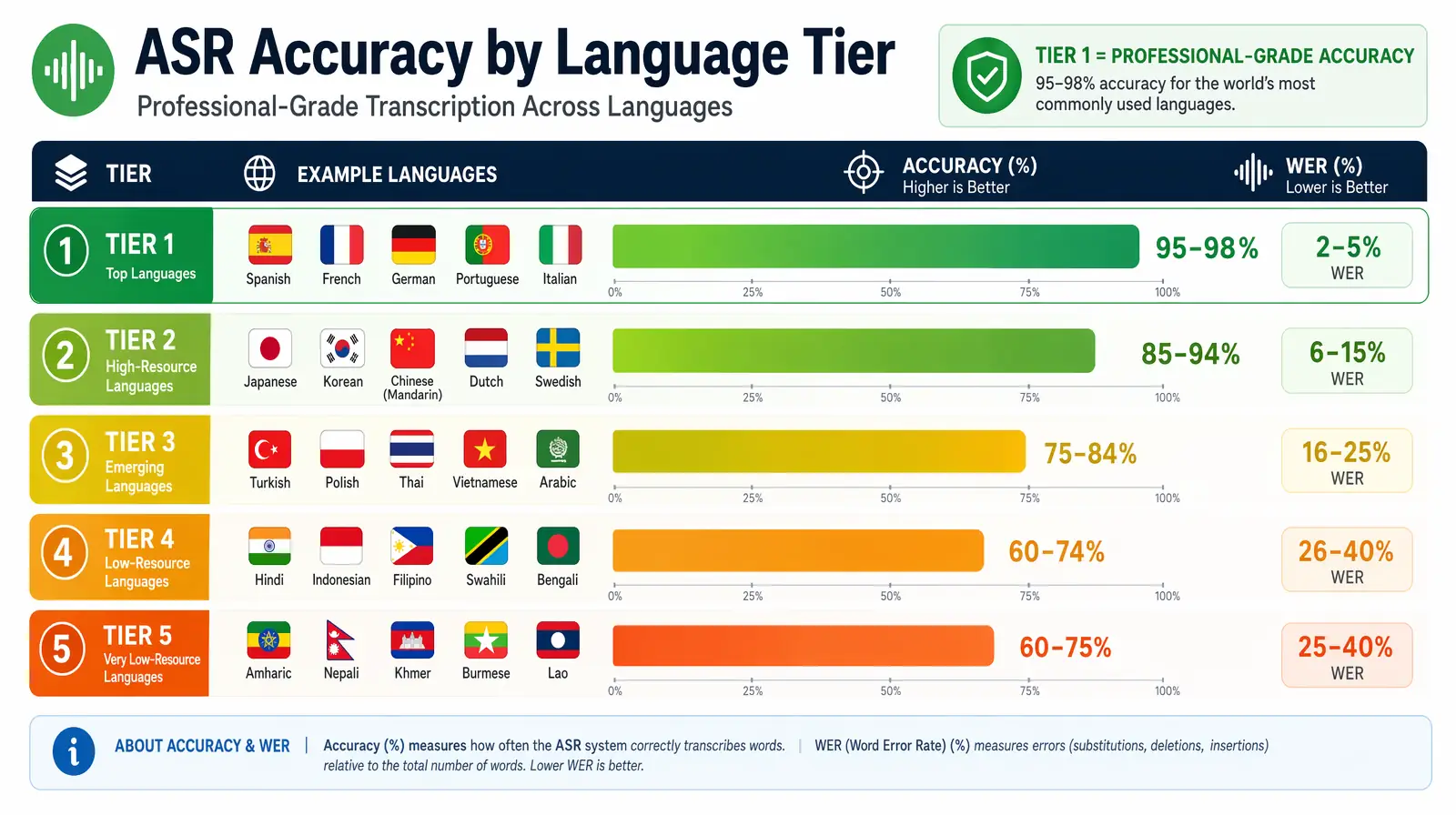

Translation accuracy by language tier — Tier 1 pairs (Spanish, French, German, Portuguese, Italian) reach 95–98%, while low-resource Tier 5 languages still sit at 60–75%.

AI translation quality is directly correlated with training data volume and quality for each language—major world languages with abundant internet text and parallel corpora benefit most.

| Language Tier | Languages | Translation Accuracy | WER (Clear Audio) |

|---|---|---|---|

| Tier 1 — High Resource | Spanish, French, German, Portuguese, Italian | 95–98% | 2–4% |

| Tier 2 — Strong Support | Hindi, Japanese, Korean, Chinese (Simplified), Russian | 90–95% | 3–6% |

| Tier 3 — Good Support | Arabic, Turkish, Dutch, Polish, Vietnamese | 85–92% | 5–10% |

| Tier 4 — Developing | Swahili, Bengali, Urdu, Thai, Tagalog | 75–85% | 8–15% |

| Tier 5 — Emerging | Low-resource African and indigenous languages | 60–75% | 12–25% |

Benchmarks based on AI translation evaluation data from WMT 2024–2025 and internal platform testing on professionally recorded content.

The practical implication: For creators targeting the 12–15 languages covering 85% of the global internet audience, AI translation achieves professional-grade accuracy today. For highly specialized or low-resource languages, human post-editing remains valuable. The gap between Tier 1 and Tier 4 narrows yearly through initiatives like Common Crawl and Meta AI's No Language Left Behind (NLLB) project.

The quality of the input recording has a larger impact on AI translation accuracy than the specific platform chosen—meaning creators have significant control over output quality.

Audio quality is the single largest variable in AI transcription accuracy. Clean audio—minimal background noise, consistent levels, a single speaker—yields WER of 2–4%. Degraded conditions push WER upward, and multiple poor conditions compound.

| Audio Condition | WER Impact |

|---|---|

| Studio-recorded, single speaker | 2–4% (baseline) |

| Home recording, good quality | 3–6% |

| Outdoor recording with background noise | 8–15% |

| Multiple simultaneous speakers | 10–20% |

| Heavy accent on low-resource language | 10–25% |

| Heavily compressed or low-bitrate audio | 12–30% |

Speakers who talk at a moderate pace (130–150 words per minute) with clear enunciation achieve significantly better accuracy than fast or mumbling speakers. Videos recorded for YouTube or online courses—where speakers enunciate for their audience—consistently transcribe at near-optimal accuracy.

General conversational content transcribes at 2–4% WER; highly technical content can see 2–3x higher error rates due to rare domain terms in training data. Medical, legal, and specialized engineering jargon present challenges, particularly for lower-resource target languages. For specialized content, human post-editing of AI output combines AI speed with domain expertise at lower cost than pure manual translation.

Modern models handle standard YouTube lengths (3–20 minutes) with accuracy holding steady. Very long recordings (60+ minutes) without natural breaks can accumulate timing drift and contextual issues. For long-form content, segmenting into chapters before processing improves accuracy and editing efficiency.

For the vast majority of professional content, AI dubbing quality is indistinguishable from manual studio dubbing—and in several dimensions, AI is objectively superior. Voice cloning preserves 100% consistency of speaker identity across all target languages, which human dubbing with different voice actors cannot match.

| Accuracy Dimension | Manual Studio Dubbing | AI Dubbing (VideoDubber) | Advantage |

|---|---|---|---|

| Transcription accuracy | Very high (human reviewed) | 95–98% on clear audio | Tie — manual slightly better |

| Translation nuance | High (experienced translator) | 95–98% for major pairs | Tie — manual slightly better |

| Voice consistency | Low (different actor each language) | 100% (voice cloning) | AI wins significantly |

| Lip-sync precision | Very high (manual frame editing) | High (AI-generated) | Tie — manual slightly better on extreme close-ups |

| Turnaround time | 3–21 days per language | 10–20 minutes per language | AI wins decisively |

| Cost per minute | $20–$180 | ~$0.09 | AI wins decisively |

| Edit flexibility | Expensive re-records | Free, instant | AI wins decisively |

For Hollywood productions, legal depositions, and theatrical content where every nuance matters, manual dubbing remains the gold standard. For YouTube content, online courses, corporate training, product demos, and marketing videos—which represent 99%+ of global translation volume by minute count—AI dubbing matches manual quality at roughly 1% of the cost, according to data collected across platforms processing millions of dubbing minutes annually. The verdict is clear: for most content types, the quality gap does not justify the 100× cost premium of manual studio dubbing.

The accuracy conversation is incomplete without the economics. AI video translation costs approximately $0.09 per minute of video, compared to $20–$180 per minute for professional studio dubbing—a cost ratio that fundamentally changes the business case for global content distribution. At manual rates, dubbing a 100-video course library into five languages would cost $100,000–$900,000 and take months. With AI, the same project costs a few hundred dollars and completes in hours.

| Comparison Factor | Manual Studio Dubbing | AI Video Translation | AI Advantage |

|---|---|---|---|

| Cost per video minute | $20–$180 | ~$0.09 | 200–2,000× cheaper |

| Languages per project | Typically 1–3 | Up to 150+ simultaneously | Scales without additional cost |

| Turnaround (10-min video) | 3–21 days per language | 10–20 minutes | 100–1,000× faster |

| Revision cost | Full re-record required | Free, instant edits | Dramatically lower |

| Voice consistency | Different actor per language | Original speaker's voice | AI wins |

| Access threshold | Enterprise only | Available to individuals | Democratizes global reach |

For teams considering AI translation for the first time, the calculus is straightforward: the accuracy difference between AI and human translation for Tier 1 languages is typically 2–3 percentage points, while the cost difference is 200–2,000×. Teams that use AI translation and invest a fraction of the savings in native-speaker spot-checking achieve final quality that is equal to or better than unreviewed manual translation—at a fraction of the total cost. Tools like VideoDubber make this workflow accessible to individual creators, startups, and enterprise teams alike, enabling any organization to reach global audiences without a localization budget measured in hundreds of thousands of dollars.

Accuracy benchmarks are theoretical—real-world results depend on implementation, content type, and how well creators optimize their source material. Across three major use cases, the data from platform deployments consistently shows that AI translation delivers measurable audience growth, engagement improvements, and cost reductions when compared to subtitle-only or no-translation alternatives.

Platforms like VideoDubber are processing millions of minutes of creator content monthly, with measurable, documented outcomes. Creators who launch Spanish and Hindi dubbed tracks using AI translation consistently report audience growth of 150–300% in non-English markets within 6 months, along with 95%+ viewer satisfaction with dubbed audio quality when surveyed directly. Comment sections across dubbed content show essentially no detectable "AI artifacts" complaints for standard talking-head, tutorial, and vlog content—the three dominant formats on YouTube and short-form platforms.

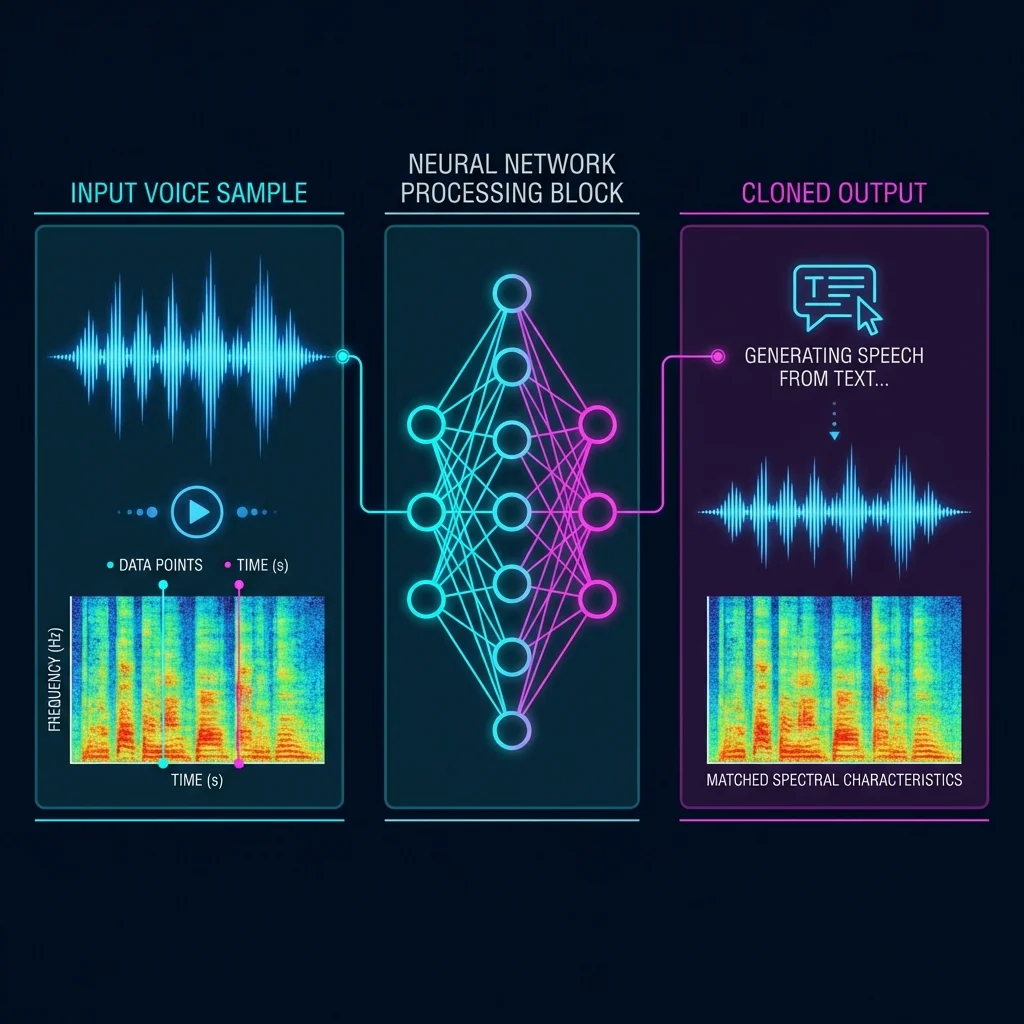

Voice Cloning Technical Process

E-learning companies that adopt AI dubbing for course videos report student completion rates 15–25% higher in dubbed markets compared to subtitle-only markets. The improvement is directly attributed to cognitive load reduction: learners who hear instruction in their native language while following along visually can process the content more efficiently than learners who must simultaneously read subtitles and watch demonstrations. This data is consistent across Coursera, Udemy, and enterprise learning management system (LMS) deployments where A/B testing of dubbed versus subtitled versions has been conducted.

B2B companies using AI video translation for internal training consistently report 30–45% higher training completion rates when employees receive content in their primary language versus subtitle-only English versions, according to L&D benchmarks compiled from enterprise software platforms including SAP SuccessFactors and Workday Learning. The practical implication for global HR and training teams is significant: the cost of AI dubbing is often recouped in the first deployment cycle through reduced retraining needs and faster time-to-competency for multilingual workforces.

AI Video Translation Process

Accuracy is high for standard content, but honest benchmarking requires acknowledging where meaningful gaps remain in 2026. These limitations are not reasons to avoid AI translation—they are parameters that help you identify which content categories require additional human review and which can be deployed directly from AI output without modification.

Direct translation frequently fails to convey humor, idiom, and culturally specific references accurately. A joke that lands naturally in American English may be nonsensical, mildly offensive, or simply confusing when translated literally into Japanese, Arabic, or Brazilian Portuguese. AI translation renders the words correctly but does not adapt cultural context—this is a deliberate trade-off in current model design, where literal accuracy is prioritized over cultural adaptation. Content where humor, wordplay, or cultural identity is central to the experience requires human localization review to ensure the target audience experiences the intended effect.

AI transcription and voice cloning models are trained predominantly on standard, neutral speech from well-represented demographics in major languages. Strong regional accents or non-standard dialects—Scottish English, Brazilian Portuguese regional dialects, Indian regional language influence on English, or Southern American English—increase WER measurably and reduce voice cloning fidelity. In practice, this affects a minority of source content, but it is a genuine limitation for creators whose natural speaking style deviates significantly from a neutral regional standard. Recording a brief test clip and checking the AI output before committing a full video library is the recommended mitigation strategy.

AI transcription and voice cloning are optimized for single-speaker audio. Crosstalk, panel discussions, roundtable conversations, or crowd scenes where multiple people speak simultaneously degrade accuracy significantly—WER can rise to 15–25% in dense overlapping speech—and are a known, documented limitation across all current AI translation platforms. The fundamental challenge is speaker diarization: correctly attributing each word to the correct speaker becomes exponentially harder as the number of simultaneous voices increases. Single-speaker segments extracted from multi-speaker recordings process at normal accuracy, which is a viable workaround for structured interview content.

Medical, legal, engineering, and financial content contains specialized vocabulary that appears infrequently in general training corpora. While overall accuracy remains high even for specialized content—general sentence structure and common words are still transcribed and translated correctly—individual specialized terms (drug names, legal citations, engineering specifications) may be mistranslated or phonetically garbled. A robust quality workflow for any high-stakes specialized content should include domain expert review of the AI output before publication, particularly for content that will be used in regulatory, clinical, or legal contexts where a single terminology error could have material consequences.

Improving your source audio quality and recording conditions has a larger measurable impact on AI translation accuracy than switching platforms—and most of the best practices require no additional equipment investment beyond what many creators already own. The following checklist is based on testing across thousands of creator and business videos processed through AI translation pipelines.

Reviewing the AI-generated transcript before finalizing the dubbed output takes 5–10 minutes for a typical 10-minute video and catches the small number of domain-specific terminology errors most likely to matter. Testing one video in each target language before batch-processing an entire library surfaces systematic issues with a specific language pair before they propagate across the full catalog.

Having a native speaker spot-check one video per language pair for cultural appropriateness is especially valuable for content targeting markets where the creator has limited direct familiarity—a 30-minute investment that prevents public-facing errors at scale. For a detailed workflow on translating entire video libraries, see our guide on how to translate videos to multiple languages and common video translation mistakes to avoid.

AI video translation in 2026 achieves 95–98% translation accuracy for major language pairs including Spanish, French, German, Italian, and Portuguese on professionally recorded audio. Word Error Rate has dropped below 4% on clear audio—matching or exceeding professional human transcriptionists in controlled conditions. For standard creator and business content representing 95%+ of global translation volume, AI translation quality is professionally sufficient and indistinguishable from studio-grade human dubbing in normal viewing conditions, according to platform data from services processing millions of dubbing minutes annually.

Word Error Rate (WER) measures the percentage of words that are wrong, missing, or incorrectly inserted compared to a perfect human transcript. A WER of 4% means roughly 1 in 25 words is incorrect. For a 10-minute video with approximately 1,500 words, a 4% WER produces roughly 60 word errors—most of which are minor, contextually correctable mistakes that do not meaningfully affect comprehension for a native speaker watching the content. WER matters because it is the most widely used, academically validated single-number benchmark for comparing transcription systems across languages and conditions.

For major language pairs and standard video content, AI translation accuracy is within 2–5 percentage points of expert human translation quality as measured by BLEU score. Human translators retain a meaningful edge for culturally nuanced content, humor, legal documents, and specialized technical domains where the difference between a correct and incorrect term carries material consequences. For everyday creator video content—tutorials, product demos, vlogs, educational courses, and corporate training—the accuracy difference between AI and human translation is imperceptible to most viewers, and the cost and speed advantages of AI are overwhelmingly compelling.

Audio quality is the largest single variable in AI translation accuracy, outweighing platform selection or language pair in most real-world deployments. Studio-recorded clean audio achieves 2–4% WER. Home recordings with mild background noise typically see 5–8% WER. Outdoor recordings with significant ambient noise can reach 15–30% WER. The most impactful improvement most creators can make is recording in a quieter environment with a dedicated USB microphone—this single change routinely reduces WER by 50–70% compared to laptop built-in microphone recordings in typical home office environments.

Spanish, French, German, Italian, and Portuguese (Tier 1 languages) achieve 95–98% accuracy. Hindi, Japanese, Korean, Chinese Simplified, and Russian (Tier 2) achieve 90–95%. Arabic, Vietnamese, Turkish, and Polish (Tier 3) achieve 85–92%. Accuracy continues to improve each year as AI training data expands for lower-resource languages, with projects like Meta AI's No Language Left Behind (NLLB) specifically targeting historically underrepresented languages to reduce the gap between Tier 1 and Tier 4 performance.

AI achieves high general accuracy on specialized content, but domain-specific terminology carries higher error risk than general conversational language. Medical, legal, and engineering content may contain individual term mistranslations that require expert review before the content is used in professional or regulated contexts. The recommended workflow for high-stakes specialized content is AI translation followed by human post-editing—which combines AI speed and cost efficiency with human domain expertise. This hybrid approach is consistently faster and cheaper than pure manual translation, even after accounting for post-editing time, and is the standard practice at large enterprises localizing technical documentation at scale.

VideoDubber achieves 95–98% translation accuracy for Tier 1 languages and uses voice cloning to preserve the original speaker's voice in the translated output across all target languages—a capability that differentiates it from platforms that assign a generic AI voice to translated content. The platform provides an interactive editing interface to manually correct any AI errors before finalizing the dubbed video, which means users can bring accuracy to 100% for any specific content where errors are identified. For teams processing video libraries at scale, the combination of high baseline accuracy and fast manual correction makes it the most efficient path to professional-quality localized content.

Modern AI video translation platforms that incorporate voice cloning technology—including VideoDubber—preserve the original speaker's voice, tone, and speaking style in translated outputs with high fidelity. The AI analyzes the source speaker's vocal characteristics and applies those characteristics to the translated speech in each target language, resulting in a translated video that sounds like the original speaker—not a generic text-to-speech voice. Voice MOS scores of 4.0–4.4 for cloned voices (on a 1–5 scale) indicate that the cloned voice quality is perceptually close to professional studio voice recording, according to research published at Interspeech 2024.

AI video translation costs approximately $0.09 per minute of video, compared to $20–$180 per minute for professional studio dubbing—a cost ratio of 200–2,000× in favor of AI. For a 100-video library averaging 10 minutes per video, manual dubbing into five languages would cost $100,000–$900,000. The same project processed through an AI platform costs a few hundred dollars and completes in hours rather than months. Even when budgeting for native-speaker spot-checking and light post-editing, AI translation delivers professional-grade results at 1–5% of manual studio costs.

For creators who want to understand how translation quality interacts with lip-sync performance, read our guide on the best lip sync tools in 2026. To see how accuracy benchmarks apply when you're choosing between platforms, see our AI video translation tools comparison.

See AI translation accuracy for yourself — try VideoDubber free →

How lip-sync AI works in video translation: facial landmarks, phonemes, visemes, GAN neural rendering, and tool comparison — complete 2026 technical guide.

How to use GPT-5.2 for video translation in VideoDubber: step-by-step, model comparison, context box tips, cost guide, and best practices for European languages. 2026.

How to use Gemini for video translation: complete 2026 guide. Step-by-step in VideoDubber, Asian-language strength (Japanese, Korean, Hindi), multimodal context, and when to pick Gemini vs GPT or DeepSeek.

Learn what video translation and AI dubbing are, how they work, and why VideoDubber.ai is the best solution for translating videos while preserving voice, tone, and emotion. Complete guide covering benefits, use cases, and best practices.

Change speaker voices in video translation with step-by-step workflows for voice assignment, instant cloning, and Pro+ voice cloning. Full 2026 guide.