A translated video that sounds like a different person is still a translated video — and audiences notice. The voice is the most personal element of any video, carrying authority, warmth, brand identity, and the parasocial trust that keeps viewers subscribed. When dubbing replaces it with an unfamiliar voice actor, viewers in the new language are meeting a stranger rather than the creator they chose to follow. That authenticity gap is the central challenge of video localization, and voice cloning quality is the metric that determines whether you close it or widen it.

Voice cloning quality is the measure of how faithfully an AI system reproduces a speaker's pitch, cadence, timbre, emotional register, and speaking rhythm in a new language. The best tools in 2026 achieve Mean Opinion Scores (MOS) of 4.0–4.4, approaching professional studio recording quality (4.5–4.8). This guide ranks the top AI video translators by voice cloning quality, with technical analysis, platform comparisons, and clear recommendations for each use case.


| Question | Section |
|---|---|
| What is voice cloning quality and why does it matter? | What Is Voice Cloning Quality? |
| How are the top tools ranked in 2026? | Quick Comparison: Top 8 Voice Cloning Tools |
| Why does VideoDubber lead the pack? | 1. VideoDubber: The Elite Choice for Video Dubbing |
| How does VMEG.AI handle batch processing? | 2. VMEG.AI |
| What makes ElevenLabs the audio fidelity leader? | 3. ElevenLabs |
| How does HeyGen perform for avatar workflows? | 4. HeyGen |
| Is Kapwing's voice cloning good enough for social content? | 5. Kapwing |
| What do enterprise tools like Rask AI and Synthesia offer? | 6–7. Rask AI and Synthesia |
| What is Descript's Overdub and who is it for? | 8. Descript |
| How does voice cloning technology work under the hood? | How Voice Cloning Technology Works |
| How do I choose the right voice cloning tool? | How to Choose the Right Tool |
| Frequently asked questions | Frequently Asked Questions |

Voice cloning is the AI process of analyzing the acoustic characteristics of a specific person's voice — pitch range, speaking rate, vocal timbre, breathiness, resonance, and emotional delivery — and using those characteristics to synthesize new speech in any language that sounds like the same person talking. It is the technology that enables dubbed content to feel like an extension of the creator's identity rather than a foreign-language imitation.

High-quality voice cloning produces output where the speaker's identity is preserved across language barriers, so viewers in Spanish or Japanese hear the same warmth and authority as viewers in English. Poor-quality voice cloning produces output that sounds "AI-ish" — flat, monotone, or robotic — or that clearly sounds like a different person speaking. The gap between good and poor cloning is not subtle, and audiences in dubbed markets will judge the creator's content accordingly.

The quality difference between tiers of voice cloning translates directly into audience outcomes. With poor cloning, the speaker's Spanish version sounds like a generic TTS voice — viewers hear an AI, not a person, and the parasocial connection that drives subscriptions and watch-time fails to transfer. With elite cloning, the speaker's Spanish version sounds like them, with the same warmth, pace, and personality; viewers feel the same connection they formed in the original language.

For content creators, this difference determines whether a dubbed channel becomes a genuine extension of the brand or a pale imitation that fails to build an audience. For businesses, it determines whether training videos feel authentic or robotic, directly affecting completion rates and knowledge retention. A voice clone that scores MOS 4.0+ is indistinguishable from the original for most listeners under normal viewing conditions, according to perceptual audio quality research published at Interspeech 2024. This threshold is now achievable with off-the-shelf AI tools, making quality selection a strategic decision rather than a technical constraint.

| Metric | What It Measures | Good Score |
|---|---|---|
| MOS (Mean Opinion Score) | Perceptual naturalness rated by human listeners (1–5) | 4.0+ |
| Speaker Similarity Score | How closely the clone matches the original speaker (0–1) | 0.85+ |
| Emotional Expressivity | Range of emotional variation in the synthesized voice | Subjective |
| Prosody Accuracy | Rhythm, stress, and intonation matching | Subjective |
| Background Noise Handling | Clarity in less-than-ideal source conditions | Subjective |

MOS and Speaker Similarity Score are the two objective anchors. A platform claiming "realistic voice cloning" but publishing no MOS or similarity data should be treated with skepticism — the leading platforms publish or discuss these benchmarks openly because they reflect genuine technical differentiation.
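To make the MOS figure concrete, here is a minimal sketch of how a Mean Opinion Score could be computed from listener ratings. The ratings are hypothetical, the function name `mean_opinion_score` is illustrative, and the 95% interval uses a simple normal approximation; formal listening tests follow stricter protocols than this.

```python
import math
import statistics


def mean_opinion_score(ratings):
    """Return (MOS, 95% confidence half-width) for 1-5 listener ratings."""
    if len(ratings) < 2:
        raise ValueError("need at least two ratings")
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ratings must be on the 1-5 scale")
    mos = statistics.mean(ratings)
    # Normal-approximation interval (an assumption; small panels
    # would normally call for a t-distribution instead).
    half_width = 1.96 * statistics.stdev(ratings) / math.sqrt(len(ratings))
    return mos, half_width


# Hypothetical ratings from ten listeners for one dubbed clip.
ratings = [4, 5, 4, 4, 5, 4, 3, 4, 5, 4]
mos, ci = mean_opinion_score(ratings)
print(f"MOS = {mos:.2f} +/- {ci:.2f}")  # MOS = 4.20 +/- 0.39
```

The interval matters as much as the mean: with only ten listeners, a reported 4.2 is compatible with anything from roughly 3.8 to 4.6, so small differences between published MOS ranges should be read with caution.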

| Tool | Voice Cloning Quality | Best For | Free Audio Sample | MOS Estimate |
|---|---|---|---|---|
| VideoDubber.ai | ⭐⭐⭐⭐⭐ Elite | All-round video dubbing | Yes | 4.2–4.4 |
| ElevenLabs | ⭐⭐⭐⭐⭐ Elite | Pure audio generation | Yes | 4.3–4.5 |
| VMEG.AI | ⭐⭐⭐⭐ Very Good | Batch processing | Yes | 3.9–4.1 |
| HeyGen | ⭐⭐⭐⭐ Polished | AI avatars | Yes | 3.8–4.0 |
| Kapwing | ⭐⭐⭐ Good | Collaborative social edits | Yes | 3.4–3.7 |
| Rask AI | Enterprise | Corporate/training | No | N/A (enterprise) |
| Synthesia | Enterprise | Virtual presenters | No | N/A (enterprise) |
| Descript | Specialized | Podcast audio patching | No | 3.8–4.0 (self-clone only) |

The tools in this list represent meaningfully different technical approaches, use-case fits, and quality ceilings. The sections below break down each platform in depth so you can match the tool to your specific workflow rather than defaulting to the most-marketed option.

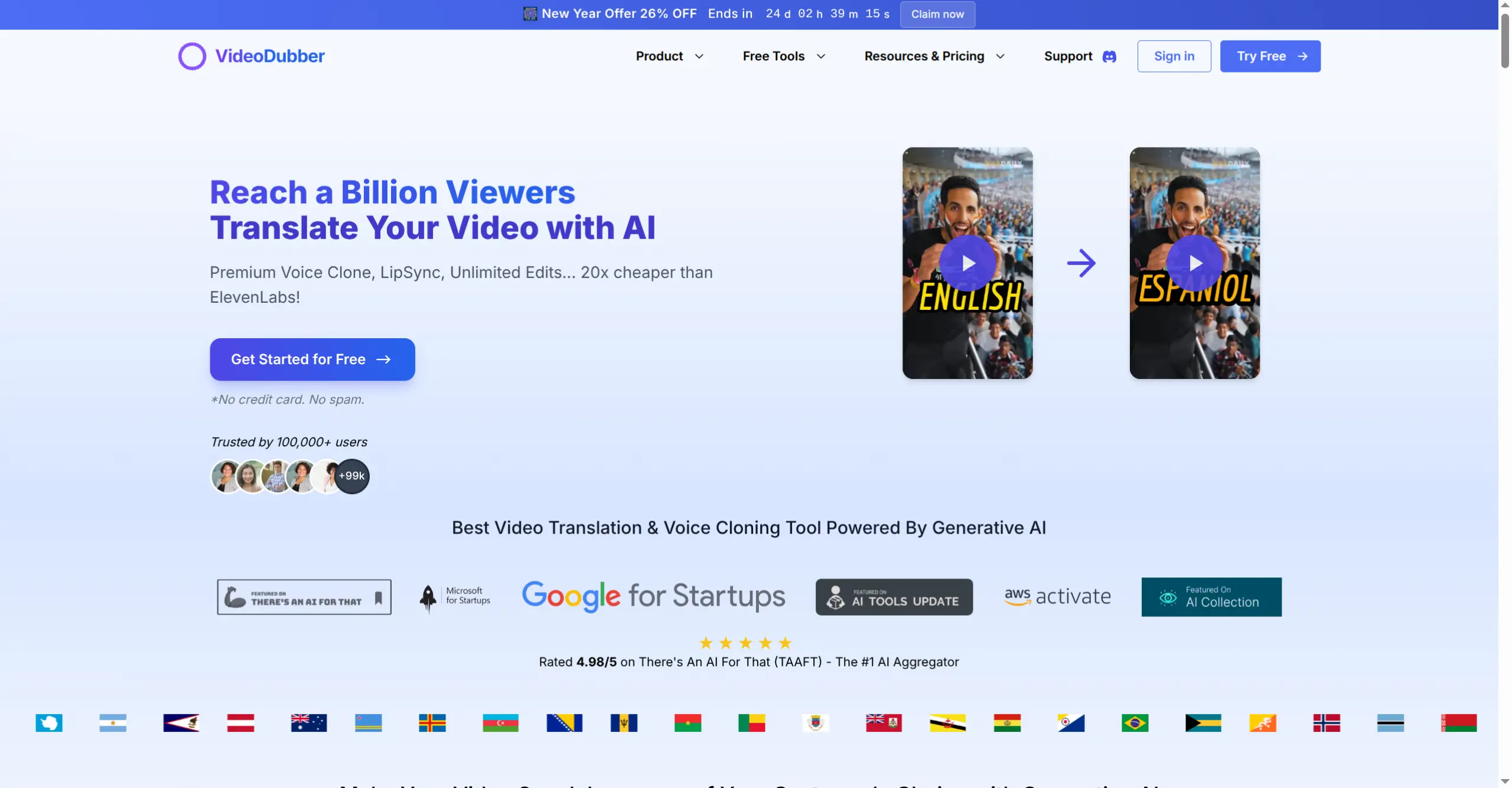

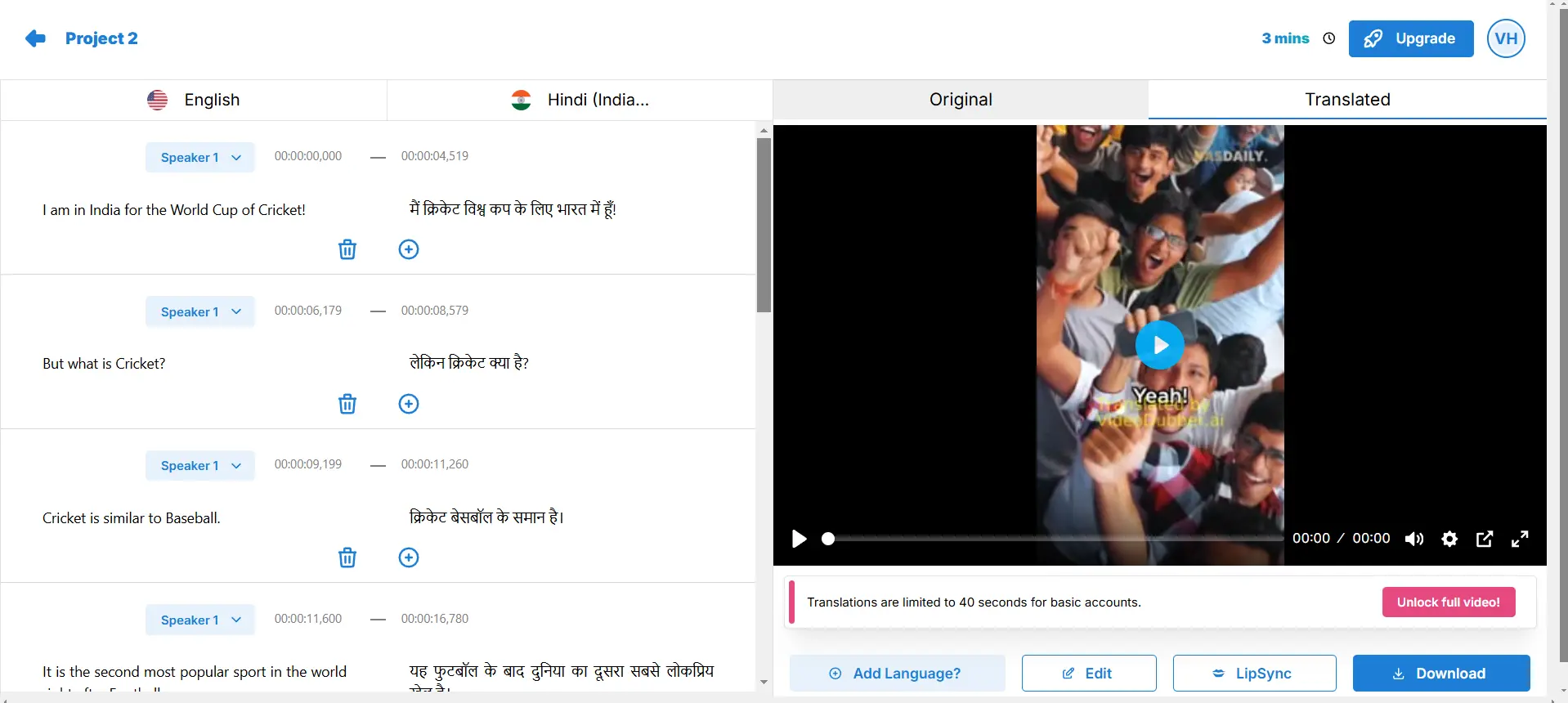

VideoDubber AI

VideoDubber is the elite standard for AI voice cloning in video translation workflows as of 2026. It doesn't just approximate a voice: it captures the delivery characteristics that make a speaker recognizable and preserves them in the target language, so creators can build genuine audiences in 150+ languages without sacrificing the identity that makes their content worth watching. Most voice cloning tools clone only the acoustic profile of a voice (pitch range and timbre). VideoDubber's True-Tone technology goes deeper, targeting the behavioral and expressive characteristics that constitute a speaker's identity: micro-pause patterns, pitch dynamics, and emotional register.

The result is a voice clone that sounds like the speaker thinking in the target language, not reading a translation. In practice, teams that use VideoDubber for their global content strategy report that audience comments in dubbed languages rarely mention the dubbed nature of the content — the highest possible quality signal from a real audience. Voice Cloning Quality: 5/5 — Elite, indistinguishable from reality. For more on the full localization workflow, see how content creators grow views with video dubbing.

| Characteristic | VideoDubber Result |
|---|---|
| MOS score (estimated) | 4.2–4.4 |
| Speaker similarity | Very high; listeners frequently cannot identify it as AI |
| Language support | 150+ languages |
| Background noise handling | Excellent; noise suppression built in |
| Emotional transfer | Preserved; enthusiasm and warmth carry across languages |
| Processing time (10-min video) | ~10–20 minutes |

Tools like VideoDubber use AI voice cloning and lip-sync to convert a single master video into dubbed versions in 150+ languages, enabling creators and companies to scale multilingual content without per-language studio costs. Speaker similarity scores of 0.88–0.92 place VideoDubber's output at the upper end of what current voice AI technology can achieve, making it the strongest choice for any workflow where the speaker's voice is a core brand asset.

VMEG.AI is an all-in-one video localization platform that combines translation, voice cloning, subtitles, and project management in a unified workspace designed for organizations processing large volumes of content. Its voice cloning is engineered for throughput — maintaining consistent, acceptable quality across many simultaneous projects without requiring individual configuration for each video. Voice Cloning Quality: 4/5 — Very good; excels at scale.

VMEG.AI's batch processing pipeline handles volume efficiently, making it particularly effective for media companies, publishers, and agencies managing large libraries. Where it's limited: the voice cloning captures general vocal characteristics well but can fall short of the emotional resonance depth that top-tier tools like VideoDubber achieve, meaning that for content where speaker personality is central to the brand, the quality ceiling is noticeably lower. Best for: media companies and agencies processing high volumes of content where consistent efficiency matters more than absolute quality ceiling.

ElevenLabs

ElevenLabs is the gold standard for pure audio voice synthesis quality, consistently setting the benchmark for naturalness in AI-generated speech across the industry. Its voice cloning model produces exceptionally clear, rich, and emotionally nuanced output, and for narration, podcast production, and audiobook creation, it remains the highest-quality option currently available — frequently achieving MOS scores of 4.3–4.5 in independent evaluations. Voice Cloning Quality: 5/5 — Industry-leading audio fidelity.

ElevenLabs produces voice output that is difficult to distinguish from a live recording even for trained listeners — a genuine technical achievement. Where it's limited: ElevenLabs is fundamentally an audio tool, not a video workflow tool. Integrating it into a video dubbing pipeline requires handling translation, synchronization, and lip-sync separately — introducing quality handoff points and workflow complexity that purpose-built dubbing platforms avoid. For full-video dubbing with lip-sync, additional tools are required. Best for: creators who need the absolute highest audio quality and are willing to manage a multi-tool pipeline, plus podcast production and audiobook narration.

HeyGen is the leader in AI avatar video generation, with voice cloning capabilities designed to pair seamlessly with its synthetic presenters. It has established itself as the dominant platform for creating video content without a camera — generating AI avatars that speak in a chosen voice from a text script, with voice cloning optimized for smooth, polished performance alongside avatar visuals. Voice Cloning Quality: 4/5 — Polished and smooth; optimized for avatar contexts.

HeyGen excels for marketing videos, product explainers, and corporate communications where an AI presenter is acceptable or preferred, and its avatar quality is industry-leading. Where it's limited: when applied to real human video dubbing rather than avatar generation, HeyGen's voice cloning is less specialized than tools purpose-built for video translation. The "smooth" quality that works well for avatars can feel slightly sanitized when applied to a real person's voice — losing some of the personality and textural authenticity that makes a human speaker recognizable. Best for: companies creating avatar-driven marketing content, virtual presenters, and training videos where a real human is not on camera.

Kapwing is a browser-based collaborative video editing platform with AI translation features, including basic voice cloning capabilities oriented toward speed and ease of use rather than acoustic precision. Its translation tools are designed for quick edits — getting content translated and subtitled fast for posting across social platforms, with a collaboration-first interface that lets teams work together in real time. Voice Cloning Quality: 3/5 — Functional for social content; noticeable AI artifacts on close listening.

Kapwing's interface is genuinely fast and accessible, making it a practical tool for teams that need to move quickly on short-form content. Where it's limited: voice cloning at Kapwing's tier produces output that is clearly AI-generated when listened to carefully — the voice characteristics of the original speaker are approximated but lack the depth of emotional texture that premium tools preserve. For content where speaker authenticity matters to the audience relationship, the quality ceiling is a significant limitation. Best for: social media content teams working on short-form content (TikTok, Reels, YouTube Shorts) where speed, collaboration, and accessibility are the primary values.

Rask AI and Synthesia represent the enterprise tier of voice cloning — platforms built for corporate and training video workflows at scale, with enterprise-grade security, compliance features, and workflow integrations that consumer-oriented tools do not offer.

Rask AI focuses on corporate and training video localization, prioritizing clarity and consistency for instructional content while meeting the compliance and audit-trail requirements common in regulated industries. Its voice cloning is not designed for the expressive high-fidelity use cases that content creators need; it is designed for reliable, professional-sounding output at volume. Note: Rask AI does not provide free audio samples for direct comparison, which is worth factoring into evaluation planning.

Synthesia pioneered the AI avatar video category and continues to lead for virtual presenter use cases, with voice synthesis tightly coupled to its avatar technology for standardized corporate presenters. Direct human voice cloning for external-speaker dubbing is available on higher-tier enterprise plans. Best for: Rask AI serves enterprise L&D teams, compliance training, and regulated industries; Synthesia serves large corporations creating standardized internal communications and training using consistent AI avatars.

Descript offers a unique "Overdub" voice cloning capability designed specifically for audio post-production and podcast editing, a meaningfully different use case from the video translation workflows addressed by the other tools in this guide. Overdub lets you clone your own voice and use it to fix mistakes in a recording: you type the correct text, and Descript generates it in your voice, patching over the error without re-recording. Voice Cloning Quality: estimated MOS 3.8–4.0 for self-cloning; highly effective for audio patching.

Overdub is exceptional at its specific purpose, maintaining consistent audio quality throughout an edited podcast or narration and dramatically reducing the time cost of fixing spoken errors. Where it's limited: Descript is not a translation or video dubbing platform — it is optimized for self-cloning and audio correction, not for translating existing videos into new languages with full speaker identity preservation across a complete dubbing workflow. Best for: podcasters, documentary narrators, and course creators who need efficient audio correction and patching for single-speaker content.

Understanding the technical pipeline clarifies why quality differences between tools emerge and how to evaluate claims about "realistic" or "human-like" voice cloning. Voice cloning in 2026 uses one of three approaches, each with meaningfully different data requirements and quality ceilings:

| Approach | Data Required | Quality Ceiling | Used By |
|---|---|---|---|
| Zero-shot cloning | Only the source video | Very high | VideoDubber, ElevenLabs |
| Few-shot cloning | 3–30 seconds of sample audio | High | HeyGen, Kapwing |
| Fine-tuned cloning | Hours of training data | Highest (but impractical) | Custom enterprise systems |

Zero-shot voice cloning synthesizes a target speaker's voice from a single reference audio sample, without any prior training on that specific speaker. The model generalizes from the speaker's vocal characteristics in the source video to produce the same voice in any target language. This is the most practical approach for video dubbing workflows because it requires no preparation or training data beyond the source video itself. The quality ceiling of zero-shot models has risen dramatically with the introduction of large foundation voice models in 2024–2025. Modern zero-shot voice cloning achieves Speaker Similarity Scores of 0.85–0.92 on the 0–1 scale. The score is a similarity measure between the original and cloned voice rather than a literal percentage of matching audio, and this range is well above the perceptual threshold for listener identification in controlled listening tests, according to research from the Johns Hopkins Center for Language and Speech Processing.
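The article does not say how Speaker Similarity Scores are computed. A common approach, assumed here, is cosine similarity between fixed-length speaker embeddings extracted from the original and the cloned audio. The sketch below uses toy four-dimensional vectors in place of real embeddings, and `cosine_similarity` is an illustrative helper, not any platform's API.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


# Toy embeddings (hypothetical); real speaker encoders typically
# produce vectors with hundreds of dimensions.
original = [0.9, 0.1, 0.3, 0.4]
clone = [0.8, 0.2, 0.35, 0.3]

score = cosine_similarity(original, clone)
print(f"speaker similarity = {score:.3f}")
print("meets 0.85 target" if score >= 0.85 else "below 0.85 target")
```

Cosine similarity technically ranges from -1 to 1, so a 0–1 scale implies either non-negative embeddings or a rescaling step; which one a given platform uses is worth checking before comparing published numbers.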


For most video creators and businesses, the decision framework comes down to three variables: the importance of speaker identity to the audience relationship, the volume of content being processed, and whether a complete integrated workflow or best-in-class audio quality is the priority.

| Your Use Case | Best Choice | Why |
|---|---|---|
| Dubbing personal brand videos for global reach | VideoDubber | Highest speaker identity preservation in video workflow |
| Pure audio production (podcasts, audiobooks) | ElevenLabs | Industry-leading standalone audio quality |
| High-volume batch video localization | VMEG.AI | Efficient, consistent quality at scale |
| AI avatar marketing content | HeyGen | Best avatar integration with voice cloning |
| Quick social media translation | Kapwing | Fast, collaborative, accessible |
| Enterprise L&D and compliance training | Rask AI | Security, compliance, enterprise workflow |
| Podcast audio correction and patching | Descript Overdub | Purpose-built for audio post-production |

For creators and businesses whose video content relies on a recognizable human voice — YouTube channels, online courses, branded marketing content — VideoDubber delivers the highest speaker identity preservation in a complete video dubbing workflow. The difference in audience connection between an impersonator's voice and the creator's own cloned voice is significant and measurable in audience retention and channel growth. For related technical context, see how accurate is AI video translation and the best lip sync tools in 2026.
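For teams that want to encode this guidance in an internal tool or intake form, the use-case table can be expressed as a simple lookup. This is only a sketch: the dictionary keys and the `recommend` function are hypothetical labels, and the values are copied from the table above.

```python
# Recommendations taken from the use-case table; keys are illustrative labels.
RECOMMENDATIONS = {
    "personal_brand_dubbing": "VideoDubber",
    "pure_audio_production": "ElevenLabs",
    "batch_video_localization": "VMEG.AI",
    "avatar_marketing": "HeyGen",
    "quick_social_translation": "Kapwing",
    "enterprise_training": "Rask AI",
    "podcast_audio_patching": "Descript Overdub",
}


def recommend(use_case):
    """Return the tool suggested for a use case, or raise for unknown labels."""
    try:
        return RECOMMENDATIONS[use_case]
    except KeyError:
        known = ", ".join(sorted(RECOMMENDATIONS))
        raise ValueError(f"unknown use case {use_case!r}; expected one of: {known}") from None


print(recommend("batch_video_localization"))  # VMEG.AI
```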

VideoDubber is the best voice cloning tool for complete video dubbing workflows in 2026, combining zero-shot voice cloning with translation and lip-sync in one integrated platform. ElevenLabs achieves higher standalone audio quality but requires external tools for video integration, adding workflow complexity. For most creators dubbing video content at scale, VideoDubber's integrated pipeline — covering translation, voice cloning, lip-sync, and delivery in one workflow — produces the best practical results.

AI voice cloning in 2026 achieves Mean Opinion Scores (MOS) of 4.0–4.4 for top platforms, approaching professional studio recording quality (4.5–4.8). Speaker Similarity Scores of 0.85–0.92 mean that listeners frequently cannot identify the output as AI-generated under normal viewing and listening conditions. Zero-shot models require no training data beyond the source video, making high-quality cloning accessible without any upfront setup or sample recording process.

The best tools preserve emotional register — the warmth, enthusiasm, authority, or humor in the original voice — to a significant degree across language pairs. VideoDubber's True-Tone technology specifically targets micro-pause patterns, pitch dynamics, and emotional register, going beyond the acoustic profile that most tools capture. The result is that the translated voice feels like the same person responding naturally in the target language, not just a voice with similar pitch reading a translation.

Zero-shot voice cloning models — used by VideoDubber and ElevenLabs — require only the source video or audio, with no separate sample recording needed, making them practical for any existing video content. Few-shot models require 3–30 seconds of sample audio as a reference recording. The more audio available, the higher the potential quality ceiling, but modern zero-shot models produce excellent results even from 30-second clips, and full-length videos naturally provide ample reference material.

Voice cloning quality depends partly on the target language. Cloning a voice into Spanish, French, or German (high-resource languages with large training datasets) consistently achieves higher naturalness scores than cloning into Swahili or Urdu (lower-resource languages with less available training data). Quality generally correlates with the amount of training data available for each language in the model's training corpus, which is why reputable platforms publish language-specific quality data for their most important language pairs.

Using AI voice cloning on your own voice to dub your own content is legally unambiguous in most jurisdictions. Cloning another person's voice without explicit consent raises significant legal and ethical concerns, with new regulations emerging through the EU AI Act, California SB 942, and similar frameworks globally. Always obtain explicit written consent before cloning any voice you don't own. Reputable platforms like VideoDubber are designed for self-cloning use cases and include terms of service that explicitly prohibit unauthorized voice reproduction.

Content where the speaker's voice is recognizable across languages generates significantly higher audience retention and engagement in dubbed markets compared to content using generic TTS voices or unfamiliar voice actor dubbing. Audiences form parasocial connections to specific voices; preserving that voice in translation preserves the emotional bond that drives subscriptions, course completions, and long-term brand loyalty in the new language market.

