Video localization vs. translation vs. dubbing comes down to depth of adaptation: translation converts words, dubbing converts audio, and localization adapts the entire cultural experience. As of 2026, AI dubbing platforms have shifted the economics — making professional-quality native-language audio accessible to any team, at any budget.


Video Translation: The Foundation

Video translation is the process of converting spoken or written text in a video from one language to another, typically delivered as subtitles or captions. It focuses on linguistic accuracy without altering the original audio or visual experience.

Video translation has been the standard approach to multilingual content for decades, largely because it requires no changes to the original production assets. The source video remains unchanged — only a text layer is added. This simplicity makes translation the fastest and most affordable entry point into multilingual content, but it comes with significant trade-offs in viewer engagement and information retention.

What video translation produces

- Subtitles: Text synchronized with the spoken audio, displayed at the bottom of the screen

- Closed captions (CC): Subtitles plus non-speech audio descriptions like sound effects and music cues

- Translated scripts: Written translations that serve as the basis for dubbing or voiceover recording

- On-screen text: Translated titles, lower-thirds, annotations, and graphics overlays
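Subtitles are commonly exchanged as SubRip (.srt) files, where each cue carries an index, a start and end timestamp in `HH:MM:SS,mmm` form, and the subtitle text. The Python sketch below shows that layout. The `Cue` type, the function names, and the sample cues are illustrative assumptions, not part of any platform's API.

```python
from dataclasses import dataclass


@dataclass
class Cue:
    start_ms: int  # cue start, in milliseconds from the beginning of the video
    end_ms: int    # cue end, in milliseconds
    text: str      # translated subtitle text


def format_timestamp(ms: int) -> str:
    """Render milliseconds as an SRT timestamp (HH:MM:SS,mmm)."""
    hours, rest = divmod(ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    seconds, millis = divmod(rest, 1_000)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d},{millis:03d}"


def to_srt(cues: list[Cue]) -> str:
    """Build SRT text: numbered cues separated by blank lines."""
    blocks = []
    for index, cue in enumerate(cues, start=1):
        timing = f"{format_timestamp(cue.start_ms)} --> {format_timestamp(cue.end_ms)}"
        blocks.append(f"{index}\n{timing}\n{cue.text}")
    return "\n\n".join(blocks) + "\n"


# Hypothetical Spanish cues for a short product demo.
sample = [
    Cue(0, 2_500, "Bienvenidos a la demostración."),
    Cue(2_500, 6_000, "Primero, abra el panel de configuración."),
]
print(to_srt(sample))
```

Working in integer milliseconds avoids rounding drift when cue times are shifted or rescaled later.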

When translation alone is the right choice

Translation suits content where immersion is secondary: internal documents, news clips, event recordings, and educational lectures where viewers expect to read along. Its key limitation is split attention: viewers must read while watching, which divides cognitive load between the visual content and the text. For tutorials or product demos that require hands-on follow-along, this caps effectiveness and task completion, because eyes on the subtitles are not on the demonstration. The preference for native-language content is also well documented: a CSA Research study found that 72% of consumers spend more time on websites available in their native language.

Translation also works well for content with low rewatch value or short shelf life — weekly meeting recordings, one-off webinar archives, and compliance videos. The cost advantage (typically $2–$10 per minute with human translators) makes it the default for high-volume, low-stakes content. Research on e-learning completion rates shows subtitle-only courses average 40–55% completion in non-native audiences, compared to 70–85% for dubbed equivalents — a gap that widens for content requiring skill application or behavioral change.

Video Dubbing: The Audio Experience

Video dubbing replaces a video's original spoken audio track with a new recording in the target language. Dubbing has historically been preferred in Germany, France, Italy, and Spain, where audiences expect native-language audio as the default. Traditional studio dubbing for a feature film cost $100,000–$300,000 per language. AI dubbing has reduced this to fractions of a cent per word with turnaround in minutes rather than months.

Modern AI dubbing replaces the original audio track with synthesized voice in the target language — at a fraction of the cost and time of traditional studio dubbing.

The dubbing market has grown significantly since 2020, driven by streaming platforms expanding into non-English markets and by YouTube creators recognizing that multilingual content opens their channels to audiences beyond the source language. Netflix alone invested over $1 billion in dubbing and subtitling in 2023, signaling that even the largest content companies treat multilingual audio as a competitive necessity rather than an optional add-on.

Types of dubbing

| Type | Description | Common Use Case |
|---|---|---|
| Simple voiceover | New voice plays alongside or over the original | Documentaries, interviews, corporate presentations |
| Full replacement dubbing | Original audio completely replaced with translated recording | Movies, TV shows, YouTube videos |
| Lip-sync dubbing | New recording timed to match visible lip movements | High-quality video content, influencer videos, branded content |
| AI dubbing | Automated translation + voice synthesis with optional lip-sync | Scalable content localization at any volume |

What dubbing achieves that subtitles cannot

68% of consumers prefer watching a video in their native language without reading subtitles, according to Wyzowl. Dubbed video outperforms subtitled video on watch time, completion rate, and conversion because listening and watching happen simultaneously. For tutorials where viewers need to follow along with on-screen actions, dubbed audio frees their eyes to focus on the demonstration. This advantage compounds for longer content — a 30-minute training video with subtitles experiences steep drop-off after minute 8, while dubbed versions maintain engagement throughout.

For YouTube creators, this difference translates directly to higher average view duration, which the algorithm rewards with increased impressions and recommendations to new viewers. Dubbing effectively removes the language barrier that previously capped a channel's growth at the size of its source-language audience.

Video Localization: The Complete Package

Video localization adapts a video's entire content experience — language, audio, on-screen text, cultural references, visual elements, and brand messaging — to feel native to a specific target market. The distinction from dubbing matters because trust and conversion correlate directly with perceived cultural fit, not just language match.

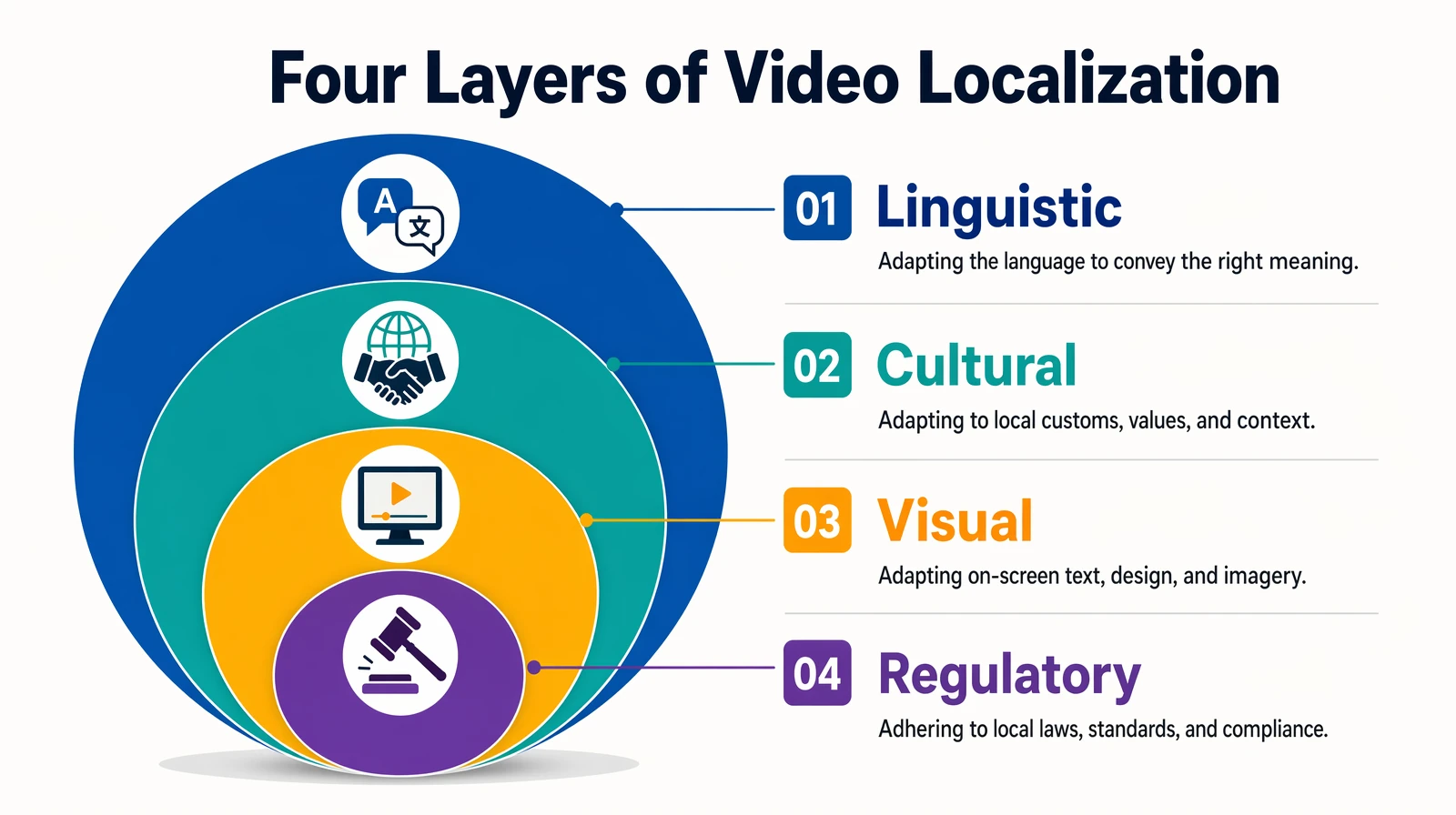

Full localization adapts four layers — language, culture, visuals, and regulatory elements — so video feels made for the target market, not just translated into it.

Localization encompasses four layers of adaptation:

- Linguistic adaptation: Regional dialect, formality level, and idiomatic expressions

- Cultural adaptation: Humor, metaphors, examples, and social conventions

- Visual adaptation: On-screen text, logos, currency symbols, date formats, and measurement units

- Regulatory adaptation: Required disclosures, restricted claims, and market-specific compliance

When localization is the right investment

Localization is justified when the audience needs to feel the content was made for them: marketing campaigns entering a new market, brand-building video for product launches, e-commerce product videos, healthcare and financial content with regulatory requirements, and entertainment where cultural resonance drives success. Most business content falls between pure translation and full localization: a hybrid called "transcreation" that adapts the most culturally sensitive elements while keeping the core structure intact.

The ROI of localization is highest for markets where cultural distance from the source content is greatest. A US-produced video entering Japan or Saudi Arabia benefits far more from full localization than the same video entering the UK or Australia, where cultural references already overlap. Teams should map target markets on a cultural distance scale to determine how much adaptation each market truly requires — over-localizing wastes budget, while under-localizing wastes the opportunity to build genuine market trust.

Comparison: Translation vs. Dubbing vs. Localization

| Feature | Translation (Subtitles) | Dubbing | Full Localization |
|---|---|---|---|
| Audio | Original unchanged | Replaced in target language | Replaced + culturally adapted |
| On-screen text | New subtitle layer added | Original usually unchanged | Fully adapted (text, graphics, logos) |
| Cultural references | Translated literally | Translated literally or adapted | Adapted or replaced for target culture |
| Viewer experience | Read while watching | Listen in native language | Fully native experience |
| Immersion level | Low | High | Very High |
| Production complexity | Low | Medium | High |
| Typical cost per minute (human) | $2–$10 | $50–$150 | $150–$500+ |
| Typical cost per minute (AI) | $0.02–$0.10 | $0.05–$0.50 | Varies (AI + human hybrid) |
| Turnaround time (per video) | Hours to 1 day | Days to weeks (human) / minutes (AI) | Weeks to months (human) |
| Best for | Low-budget, internal content | YouTube, training, e-learning, support | Marketing campaigns, brand launches |

Verdict: Which approach is best for most teams?

For most teams in 2026, AI dubbing offers the best balance of immersion, cost, and speed — delivering the viewer experience of professional dubbing at a cost comparable to subtitling. Full localization remains the right choice when cultural resonance is critical to brand trust or regulatory compliance. Translation-only is best reserved for internal content or contexts where audiences are accustomed to subtitles.

The practical recommendation is to start with AI dubbing for your highest-traffic content, measure the engagement lift across dubbed languages, then use that data to justify expanding to full localization for markets where the numbers support it. This staged approach minimizes upfront risk while building the internal expertise and workflows needed for larger-scale multilingual operations.

Cost Breakdown: How Much Does Each Approach Cost?

Understanding the true cost of each approach requires looking beyond per-minute rates to consider project-level economics. A single video translated with subtitles costs very little, but the same video professionally dubbed with voice talent, studio time, and quality assurance multiplies the investment by 10–50x depending on language pair and content type.

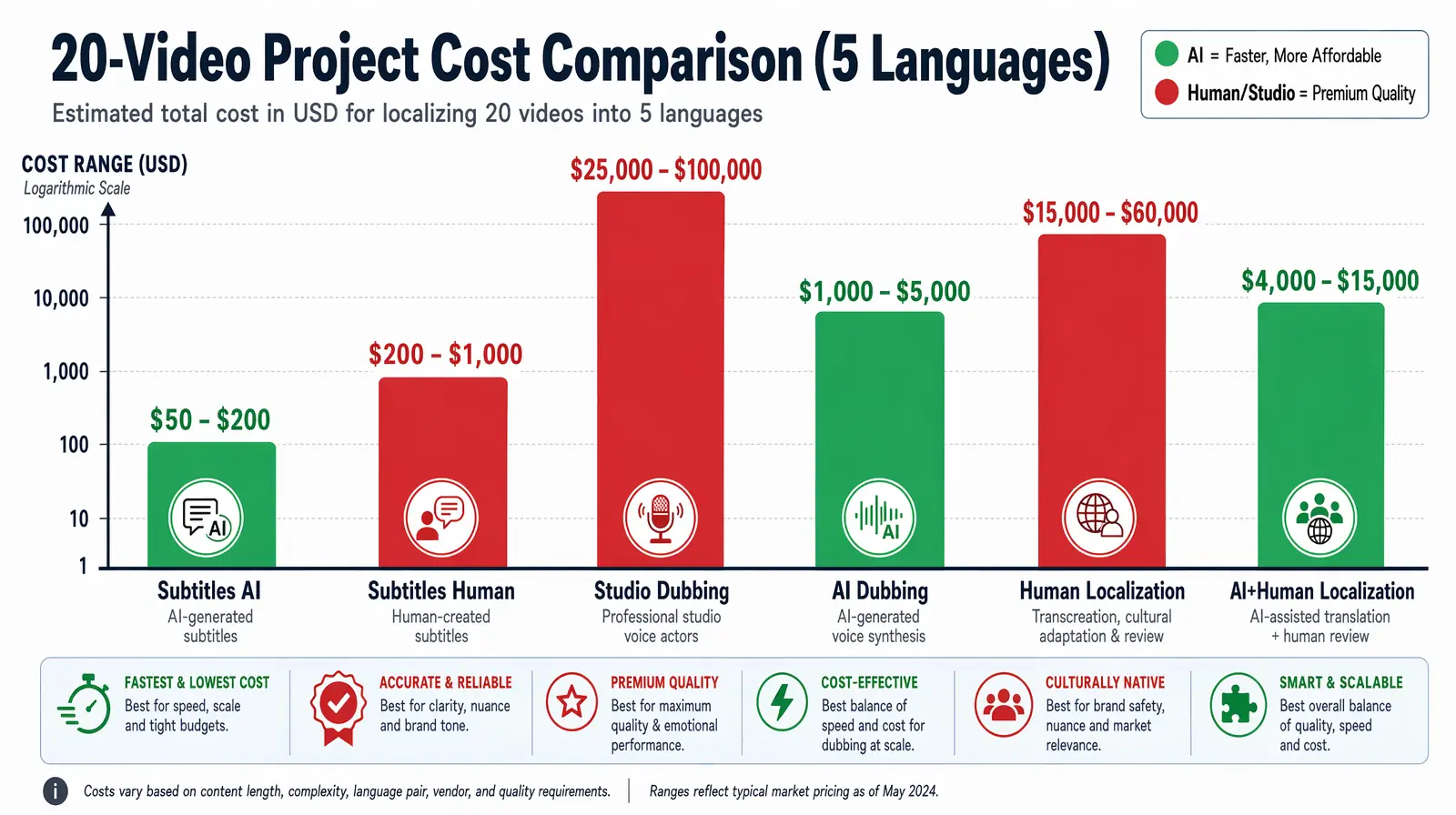

Realistic project-level cost ranges for translating, dubbing, or fully localizing a 20-video library across 5 languages. Among the dubbing options, AI dubbing is the clear cost leader.

Realistic estimates for a 20-video project averaging 5 minutes per video, localized into 5 languages:

| Approach | Method | Cost Estimate | Turnaround |
|---|---|---|---|
| Subtitles only | Human translation | $1,000–$5,000 total | 2–5 days |
| Subtitles only | AI auto-caption | $0–$200 total | Hours |
| Dubbing | Traditional studio | $25,000–$75,000 total | 6–12 weeks |
| Dubbing | AI dubbing (VideoDubber) | $450–$2,500 total | 1–2 days |
| Full localization | Human agency | $75,000–$200,000+ total | 3–6 months |
| Full localization | AI + human hybrid | $5,000–$25,000 total | 2–4 weeks |

AI dubbing reduces per-language-per-minute cost by more than 95% compared to traditional studio dubbing, according to industry pricing benchmarks across platforms including VideoDubber, Murf, and ElevenLabs. For a typical YouTube channel producing 4 videos per month, AI dubbing into 5 languages adds less than 10% to existing production costs while potentially doubling total addressable audience.
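The project estimates can be cross-checked with simple arithmetic: 20 videos of 5 minutes each in 5 languages is 500 language-minutes. The sketch below divides the table's project totals by that figure and compares the studio and AI totals like for like (low with low, high with high). The variable and function names are illustrative. The dollar figures are copied from the table.

```python
# Project size taken from the estimate above: 20 videos, 5 minutes each, 5 languages.
VIDEOS = 20
MINUTES_PER_VIDEO = 5
LANGUAGES = 5

language_minutes = VIDEOS * MINUTES_PER_VIDEO * LANGUAGES  # 500

# Project totals (low, high) in USD, copied from the table above.
project_totals = {
    "traditional_studio": (25_000, 75_000),
    "ai_dubbing": (450, 2_500),
}


def implied_rate(total_usd: float) -> float:
    """Cost per language-minute implied by a project total."""
    return total_usd / language_minutes


def reduction_pct(studio_usd: float, ai_usd: float) -> float:
    """Percentage saved by moving from the studio total to the AI total."""
    return (1 - ai_usd / studio_usd) * 100


studio_low, studio_high = project_totals["traditional_studio"]
ai_low, ai_high = project_totals["ai_dubbing"]

print(f"language-minutes: {language_minutes}")
print(f"studio rate: ${implied_rate(studio_low):.2f} to ${implied_rate(studio_high):.2f}")
print(f"AI rate:     ${implied_rate(ai_low):.2f} to ${implied_rate(ai_high):.2f}")
print(f"reduction (low vs low):   {reduction_pct(studio_low, ai_low):.1f}%")
print(f"reduction (high vs high): {reduction_pct(studio_high, ai_high):.1f}%")
```

With these inputs, both like-for-like comparisons come out above 95%. The implied AI rate per language-minute is higher than the list per-minute range quoted earlier. One possible explanation, stated here as an assumption, is that project totals include plan minimums or review time.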

The cost efficiency becomes even more pronounced at scale. Processing 100 videos through AI dubbing costs roughly what 3–4 videos would cost with traditional studio methods. This makes previously impossible library-wide localization feasible for independent creators and mid-size businesses, not just enterprise content teams with six-figure budgets.

Budget allocation guide

- Under $500/month: AI subtitles + manual review of top 5 highest-traffic videos

- $500–$2,000/month: AI dubbing for top-performing content in 3–5 languages

- $2,000–$10,000/month: AI dubbing for full active library + human review for compliance-critical content

- $10,000+/month: AI dubbing at scale + human localization for marketing and brand content

For most teams beginning a multilingual strategy, the $500–$2,000 tier can deliver measurable ROI within the first month by reaching audiences that previously bounced due to language barriers. The key insight is that AI dubbing has collapsed the cost curve: a budget that once covered one language now covers five or more.
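The budget guide can be expressed as a small lookup, which is handy when planning spend across several channels or teams. This is a sketch only. The function name is hypothetical, and because the guide's ranges share boundary values, it assumes a budget exactly on a shared boundary ($2,000 or $10,000) falls into the lower tier.

```python
def recommend_tier(monthly_budget_usd: float) -> str:
    """Map a monthly budget to the tier described in the budget guide."""
    if monthly_budget_usd < 500:
        return "AI subtitles + manual review of top 5 highest-traffic videos"
    if monthly_budget_usd <= 2_000:
        return "AI dubbing for top-performing content in 3-5 languages"
    if monthly_budget_usd <= 10_000:
        # Assumption: exactly $10,000 is treated as part of this tier.
        return "AI dubbing for full active library + human review for compliance-critical content"
    return "AI dubbing at scale + human localization for marketing and brand content"


for budget in (300, 1_200, 6_000, 25_000):
    print(budget, "->", recommend_tier(budget))
```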

Choosing the Right Strategy for Your Content

The choice depends on four factors: audience size, content type, budget, and required immersion level. The matrix below maps common content types to the recommended approach based on how audiences consume each format and what engagement outcomes matter most.

| Content Type | Recommended Strategy | Why |
|---|---|---|
| YouTube videos / vlogs | AI Dubbing | Drives watch time and subscriber growth |
| Online courses / e-learning | AI Dubbing + subtitles | Learner comprehension improves with native audio |
| Corporate training videos | AI Dubbing + glossary | Employee retention requires language match |
| Customer support / how-to | AI Dubbing | Follow-along tasks work better when viewers listen |
| Marketing campaigns | Localization (AI + human) | Brand trust requires more than language conversion |
| Internal communications | AI Dubbing (voice clone) | Speaker recognition builds trust |
| Documentary / interview | Simple voiceover or subtitles | Original voice intended to be preserved |

Teams that start with AI dubbing on their top 20% of content by traffic see the most measurable ROI before scaling to a broader library. Prioritize videos with high international traffic share, evergreen shelf life, and strong conversion intent. YouTube Studio and similar analytics platforms show geographic viewer distribution — use this data to identify which languages deliver the highest marginal return per dollar spent on dubbing.
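One way to apply this prioritization is to take the top slice of the library by traffic, then order that slice by evergreen status and international traffic share. The sketch below assumes the analytics data has already been exported. The `Video` fields, the sample numbers, and the ordering rule are illustrative assumptions rather than a prescribed method.

```python
import math
from dataclasses import dataclass


@dataclass
class Video:
    title: str
    monthly_views: int
    international_share: float  # fraction of views from outside the source-language market
    evergreen: bool


def pick_dubbing_candidates(videos: list[Video], top_fraction: float = 0.2) -> list[Video]:
    """Return the top slice of videos by traffic, evergreen and international-heavy first."""
    count = max(1, math.ceil(len(videos) * top_fraction))
    by_traffic = sorted(videos, key=lambda v: v.monthly_views, reverse=True)[:count]
    # Within the slice, prefer evergreen videos, then higher international share.
    return sorted(by_traffic, key=lambda v: (v.evergreen, v.international_share), reverse=True)


# Hypothetical library; a larger fraction is used so the small sample yields several picks.
library = [
    Video("Setup tutorial", 12_000, 0.45, True),
    Video("Launch livestream", 30_000, 0.10, False),
    Video("Pricing walkthrough", 8_000, 0.60, True),
    Video("Weekly update", 2_000, 0.30, False),
    Video("Feature deep dive", 15_000, 0.35, True),
]
for video in pick_dubbing_candidates(library, top_fraction=0.5):
    print(video.title)
```

Conversion intent is left out because it depends on how each team measures conversions. It could be added as another field in the sort key.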

How AI Dubbing Changes the Localization Equation

AI dubbing combines speech-to-text transcription, neural machine translation, text-to-speech synthesis, and optional lip-sync correction into an automated end-to-end process. Platforms like VideoDubber support 150+ languages and generate a fully dubbed video in 5–15 minutes rather than the 4–8 weeks a traditional studio requires. This speed difference fundamentally changes multilingual strategy — teams can publish simultaneously in multiple languages on the same day as the original.
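The stages described above can be pictured as a simple per-segment pipeline. The sketch below is not any platform's implementation: the stage signatures, the `Segment` type, and the stand-in functions are all hypothetical, and the optional lip-sync stage is omitted. Each stage is passed in as a callable so real speech, translation, and synthesis engines could be swapped in.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Segment:
    start_s: float
    end_s: float
    text: str


# Hypothetical stage signatures.
Transcriber = Callable[[str], list[Segment]]  # video path -> source-language segments
Translator = Callable[[str, str], str]        # (text, target language) -> translated text
Synthesizer = Callable[[str, str], bytes]     # (text, target language) -> audio bytes


def dub(video_path: str, target_lang: str, transcribe: Transcriber,
        translate: Translator, synthesize: Synthesizer) -> list[tuple[Segment, bytes]]:
    """Run transcription, translation, and synthesis per segment, keeping source timing."""
    dubbed = []
    for seg in transcribe(video_path):
        translated = Segment(seg.start_s, seg.end_s, translate(seg.text, target_lang))
        dubbed.append((translated, synthesize(translated.text, target_lang)))
    return dubbed


# Stand-in stages for demonstration only; they do no real speech or translation work.
def fake_transcribe(path: str) -> list[Segment]:
    return [Segment(0.0, 2.0, "Hello"), Segment(2.0, 4.5, "Welcome to the demo")]


def fake_translate(text: str, lang: str) -> str:
    return f"[{lang}] {text}"


def fake_synthesize(text: str, lang: str) -> bytes:
    return text.encode("utf-8")


result = dub("master.mp4", "es", fake_transcribe, fake_translate, fake_synthesize)
for seg, audio in result:
    print(seg.start_s, seg.end_s, seg.text, len(audio))
```

Keeping the source timestamps on each translated segment is what lets a later stage fit the synthesized audio back onto the original timeline.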

The key technological advances include large language models trained on multilingual parallel corpora, neural voice synthesis matching prosody and emotion across languages, and real-time lip-sync algorithms that adjust both timing and visual frames. Modern platforms use voice cloning to preserve the speaker's tonal characteristics across all target languages.

In a 2024 Wyzowl survey, 73% of respondents said they would watch more video content from a creator if it were available in their native language — representing substantial untapped reach for any creator not yet publishing multilingual content. Combined with YouTube's algorithmic preference for high watch-time content across markets, AI dubbing creates a compounding growth loop: more languages lead to more watch time, which leads to more algorithmic distribution, which leads to more subscribers across every language served.

Lip-Sync and Voice Cloning Explained

Two features separate basic AI dubbing from professional-grade results: lip-sync correction and voice cloning. Without them, dubbed content can feel robotic or disconnected from the visuals, triggering the "uncanny valley" effect that causes viewers to disengage. With them, the dubbed version comes close to native-language production for most informational and educational content.

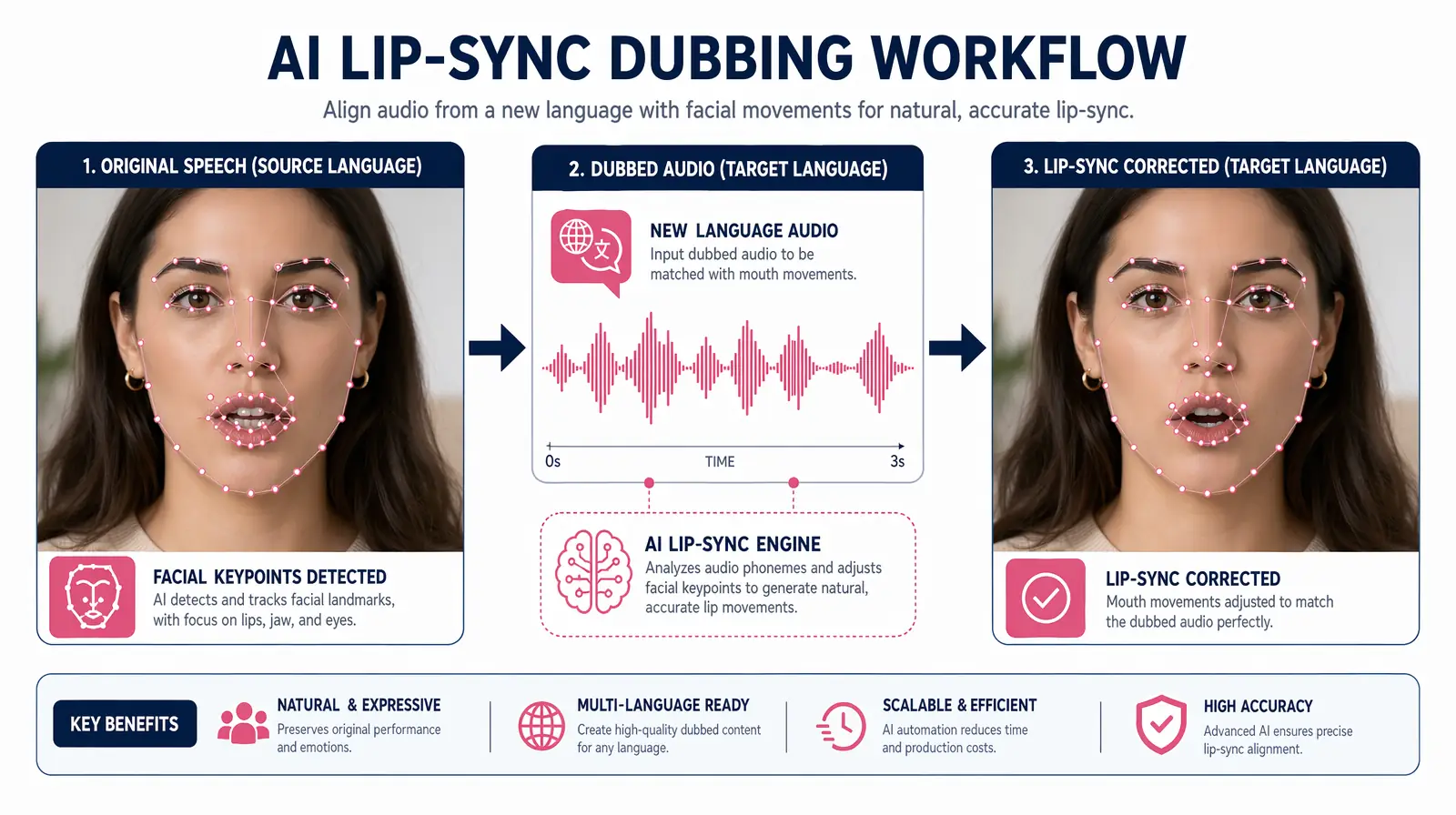

Modern lip-sync AI analyzes facial keypoints frame-by-frame and regenerates mouth movements so the dubbed audio matches what viewers see on screen.

Lip-Sync Correction

Lip-sync correction adjusts synthesized speech timing — and in advanced implementations, the visual video itself — to match the mouth movements of the on-screen speaker. Modern lip-sync AI analyzes the new audio waveform alongside the speaker's facial keypoints frame by frame, applying timing and subtle facial animation corrections. See how lip-sync AI works in video translation for a technical deep-dive.

Voice Cloning

Voice cloning captures a speaker's unique vocal characteristics — pitch, rhythm, articulation, and emotional coloring — and replicates them in a synthesized voice across target languages. This means the same presenter can "speak" Spanish, Japanese, or Arabic while sounding recognizably like themselves. VideoDubber supports:

- Instant voice cloning: Adapts style and tonality from the original speaker's audio — no separate sample required

- Pro+ custom cloning: Upload a clean 30–60 second audio sample for higher fidelity and expressiveness

| Feature | Without Voice Cloning | With Voice Cloning |
|---|---|---|
| Speaker identity | Generic AI voice | Sounds like the original speaker |
| Brand consistency | Low | High |
| Emotional authenticity | Generic | Preserved |
| Best use case | Anonymous narration, how-to content | Named presenters, leadership content, creator channels |

Common Mistakes When Choosing a Localization Strategy

Teams making their first multilingual expansion commonly fall into predictable traps. Awareness of these mistakes can save thousands of dollars and months of suboptimal performance.

- Defaulting to subtitles because they're cheaper upfront: Subtitles generate less engagement than dubbed alternatives. For high-value content, dubbing ROI often exceeds cost within weeks.

- Paying for full studio dubbing when AI would suffice: For YouTube, training, and e-learning, AI dubbing at $0.05–$0.50 per minute achieves comparable satisfaction to studio dubbing at $50–$150 per minute.

- Treating localization as a one-time project: Video content evolves with product updates. A localization strategy needs a versioning workflow from day one.

- Not considering voice cloning for brand content: Generic AI voices lose speaker identity and brand authority.

- Under-investing in high-stakes markets: The incremental cost of adding one more language to an AI dubbing job is typically a few dollars per video.

Step-by-Step Workflow: From One Video to Many Languages

Complete workflow using VideoDubber as the example platform. This process applies whether you are dubbing a single video or batch-processing an entire content library across multiple target languages.

Six-step workflow from strategy and master prep through generation, review, and multilingual publishing on YouTube or LMS platforms.

- Determine Strategy: Decide between subtitles only, AI dubbing, or AI dubbing with a localization review pass. For most content, AI dubbing is the optimal starting point.

- Prepare the Master: Ensure clean audio with the primary speaker clearly audible. Keep pacing at 80–120 words per minute so that translated speech, which often runs longer than the source, has room to fit the original timing. Export at the highest available quality.

- Upload and Configure: Set the number of speakers, select target languages (150+ available), choose voice cloning options, and attach a glossary for brand terminology that should not be translated.

- Generate Dubbed Versions: Processing takes 5–15 minutes for videos under 30 minutes. The platform returns separate video files per language with dubbed audio, optional subtitles, and SRT files for accessibility.

- Review Key Sections: Play the first 30 seconds, a middle section, and the CTA of each dubbed video. Verify terminology, tone, and pacing. Flag sections for correction using the timeline editor.

- Publish: Upload language-specific versions to YouTube, your LMS, or your website. Tag each version with language metadata for discoverability and international SEO.
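The pacing guideline in the Prepare the Master step can be checked before upload if a transcript and the video duration are available. The sketch below is an illustration with hypothetical function names. It counts whitespace-separated words, which is only a rough measure and does not suit languages written without spaces.

```python
def words_per_minute(transcript: str, duration_seconds: float) -> float:
    """Average speaking rate of a transcript over the given duration."""
    if duration_seconds <= 0:
        raise ValueError("duration_seconds must be positive")
    return len(transcript.split()) * 60 / duration_seconds


def pacing_status(wpm: float, low: float = 80, high: float = 120) -> str:
    """Classify a speaking rate against the 80-120 wpm range from the workflow."""
    if wpm < low:
        return "slow"
    if wpm > high:
        return "fast"
    return "ok"


# Hypothetical master: 150 words spoken in 60 seconds.
transcript = " ".join(["word"] * 150)
rate = words_per_minute(transcript, 60)
print(rate, pacing_status(rate))
```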

Teams that adopt this workflow report that the review step takes more time than all other steps combined — reinforcing that the AI handles the heavy lifting while humans focus on quality assurance for brand-critical moments. Building a review checklist specific to your content type (brand terminology, tone requirements, compliance language) accelerates this step significantly after the first few videos establish a pattern.
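One item on such a checklist, confirming that protected brand terms survived translation unchanged, lends itself to a quick automated check on the translated transcript. The sketch below uses hypothetical names and sample text and does an exact, case-sensitive match. It is a pre-review aid, not a substitute for listening to the dubbed audio.

```python
def missing_glossary_terms(translated_text: str, protected_terms: list[str]) -> list[str]:
    """Return protected terms that do not appear verbatim in the translated text."""
    return [term for term in protected_terms if term not in translated_text]


# Hypothetical glossary and translated transcript line.
glossary = ["VideoDubber", "Pro+"]
translated = "Suba su video a VideoDubber y elija el plan profesional."

print(missing_glossary_terms(translated, glossary))
```

Any term the check reports can be flagged for correction before the manual pass over the opening, middle, and CTA sections.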

For related workflows, see how to translate training and internal videos at scale and the overview of top languages to prioritize for video translation.

Frequently Asked Questions

The following questions represent the most common concerns from teams evaluating video localization, translation, and dubbing for the first time.

What is the difference between video localization and video translation?

Video translation converts dialogue into another language as subtitles or a dubbed script. Video localization goes further — adapting cultural references, visuals, dates, prices, and tone to feel native to the target market. All localization includes translation, but not all translation constitutes localization. The distinction matters because localization addresses trust and purchase intent at a level subtitles alone cannot achieve.

Is dubbing better than subtitles for YouTube videos?

For most YouTube content, dubbing delivers higher watch time, completion rate, and subscriber conversion. Viewers engage more deeply when they hear content in their native language without reading. The exception is content where the creator's original voice is part of the experience — subtitles preserve authenticity there.

How accurate is AI dubbing compared to professional human dubbing?

Modern AI dubbing achieves 85–95% of the perceived quality of professional human dubbing for standard informational content. For tutorials and product demos, many viewers cannot reliably identify AI-dubbed audio in blind tests. The gap narrows further with voice cloning and lip-sync correction enabled.

Can I localize a video without re-shooting it?

Yes — AI dubbing and localization tools work entirely in post-production. Upload your master video and the platform generates dubbed versions without re-recording or re-shooting any footage.

How many languages can AI dubbing support?

VideoDubber supports 150+ languages, including Spanish, Hindi, Portuguese, German, French, Japanese, Korean, Arabic, Indonesian, and Swahili.

Is video localization worth the extra cost over subtitles?

For marketing and brand content entering a new market, localization is almost always worth it. CSA Research found that 75% of consumers are more likely to purchase when information is in their native language. Subtitles drive awareness; localization drives conversion and long-term brand trust in the target market.

What is the fastest way to localize a large video library?

Use VideoDubber with batch processing. Upload the top 20% of videos by traffic with target languages and glossary pre-configured. For 50+ video libraries, this produces fully dubbed multilingual versions within a single business day. Prioritize evergreen content first — these videos continue generating returns across languages for months or years after the initial dubbing investment, making them the highest-ROI candidates for early localization.

Summary: Translation, Dubbing, and Localization — Know Your Tools

- Video translation converts words into subtitles or a script. Fast and inexpensive but splits viewer attention and limits engagement on longer content.

- Video dubbing replaces the audio track with a translated recording. Creates a native listening experience and significantly improves watch time, completion rate, and conversion.

- Video localization adapts the entire experience — language, audio, cultural references, and visuals — for a target market. Highest investment and impact for brand-critical content.

- AI dubbing has made professional-quality dubbing accessible to any creator, reducing cost by 95%+ and turnaround from weeks to minutes. VideoDubber with voice cloning and lip-sync delivers results previously achievable only in a recording studio.

- For most YouTube, training, and e-learning content, AI dubbing is the best starting point — delivering immersion at a cost that makes multilingual publishing viable at any scale.

